400GBASE-SR16

800 Gb/s

# lanes

16

10

8

4

2

1

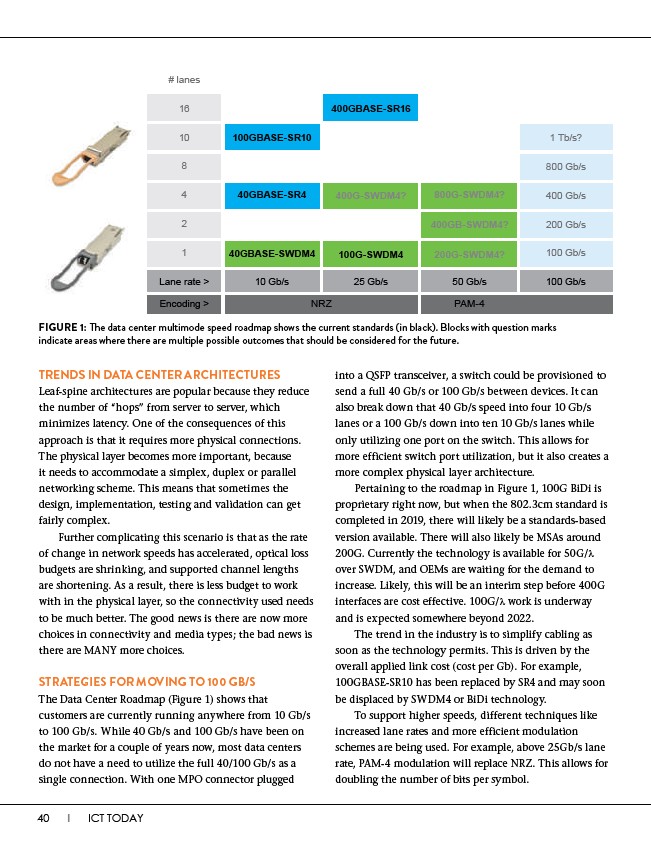

FIGURE 1: The data center multimode speed roadmap shows the current standards (in black). Blocks with question marks

indicate areas where there are multiple possible outcomes that should be considered for the future.

TRENDS IN DATA CENTER ARCHITECTURES

Leaf-spine architectures are popular because they reduce the number of “hops” from server to server, which minimizes latency. One consequence of this approach is that it requires more physical connections, because every leaf switch connects to every spine switch. The physical layer therefore becomes more important: it must accommodate simplex, duplex or parallel networking schemes, which can make design, implementation, testing and validation fairly complex.
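The link growth behind leaf-spine designs can be sketched as follows, assuming a simple two-tier fabric in which every leaf connects once to every spine (real fabrics often use several parallel links per pair). The switch counts and helper names are hypothetical, chosen only for illustration.

```python
def leaf_spine_links(leaves: int, spines: int, links_per_pair: int = 1) -> int:
    """Leaf-to-spine links when every leaf connects to every spine."""
    return leaves * spines * links_per_pair


def fabric_fibers(leaves: int, spines: int, fibers_per_link: int) -> int:
    """Total fibers: 2 per duplex link, 8 per 4-lane parallel link, etc."""
    return leaf_spine_links(leaves, spines) * fibers_per_link


def switches_traversed(src_leaf: int, dst_leaf: int) -> int:
    """Switches on the path between servers attached to the given leaves.

    Same leaf: just that leaf (1). Different leaves: leaf, spine, leaf (3),
    no matter which two leaves are chosen.
    """
    return 1 if src_leaf == dst_leaf else 3


if __name__ == "__main__":
    # Hypothetical fabric: 16 leaf switches and 4 spine switches.
    print(leaf_spine_links(16, 4))      # 64 links
    print(fabric_fibers(16, 4, 2))      # 128 fibers with duplex links
    print(fabric_fibers(16, 4, 8))      # 512 fibers with 4-lane parallel links
    print(switches_traversed(0, 15))    # 3
```

The hop count stays fixed as the fabric grows, while the link and fiber counts multiply, which is the trade-off the paragraph describes.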

Further complicating this scenario, as the pace of change in network speeds has accelerated, optical loss budgets have been shrinking and supported channel lengths have been getting shorter. With less loss budget to work with in the physical layer, the connectivity used must perform much better, that is, contribute less insertion loss. The good news is that there are now more choices in connectivity and media types; the bad news is that there are MANY more choices.
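The budget squeeze can be sketched as a simple insertion-loss check: fiber attenuation times length, plus the loss of each mated connector pair, compared against the channel budget. All numbers here are illustrative assumptions, not values from this article: a 1.9 dB channel budget, 3.5 dB/km cabled multimode attenuation at 850 nm, 0.5 dB per standard mated pair and 0.35 dB per low-loss pair. Real designs should use the applicable IEEE 802.3 clause and vendor data.

```python
from dataclasses import dataclass


@dataclass
class Channel:
    length_m: float
    mated_pairs: int
    loss_per_pair_db: float
    attenuation_db_per_km: float = 3.5  # assumed cabled MMF loss at 850 nm

    def insertion_loss_db(self) -> float:
        """Fiber loss plus connector loss for the whole channel."""
        fiber_loss = self.attenuation_db_per_km * self.length_m / 1000.0
        connector_loss = self.mated_pairs * self.loss_per_pair_db
        return fiber_loss + connector_loss


def fits_budget(channel: Channel, budget_db: float) -> bool:
    return channel.insertion_loss_db() <= budget_db


if __name__ == "__main__":
    BUDGET_DB = 1.9  # assumed channel insertion-loss budget
    designs = {
        "3 standard pairs": Channel(100, 3, 0.5),
        "4 standard pairs": Channel(100, 4, 0.5),
        "4 low-loss pairs": Channel(100, 4, 0.35),
    }
    for name, ch in designs.items():
        status = "OK" if fits_budget(ch, BUDGET_DB) else "over budget"
        print(f"{name}: {ch.insertion_loss_db():.2f} dB -> {status}")
```

Under these assumptions the four-connector channel only fits when low-loss connectivity is used, which is the sense in which the connectivity needs to be better.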

STRATEGIES FOR MOVING TO 100 GB/S

The Data Center Roadmap (Figure 1) shows that customers are currently running anywhere from 10 Gb/s to 100 Gb/s. While 40 Gb/s and 100 Gb/s have been on the market for a couple of years now, most data centers do not need the full 40 or 100 Gb/s as a single connection. With one MPO connector plugged into a parallel-optic transceiver such as a QSFP, a switch can be provisioned to send a full 40 Gb/s or 100 Gb/s between devices. It can also break a 40 Gb/s port into four 10 Gb/s lanes, or a 100 Gb/s port into ten 10 Gb/s lanes, while using only one port on the switch. This makes more efficient use of switch ports, but it also creates a more complex physical layer architecture.

40 I ICT TODAY
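The breakout arithmetic can be sketched as below, assuming parallel optics in which each lane uses one transmit and one receive fiber, and assuming the common 12- and 24-fiber MPO sizes. The 32-port switch is hypothetical.

```python
def breakout_lanes(port_gbps: int, lane_gbps: int) -> int:
    """Number of lanes a port can be broken out into."""
    if port_gbps % lane_gbps != 0:
        raise ValueError("port rate must be a whole multiple of the lane rate")
    return port_gbps // lane_gbps


def parallel_fibers(lanes: int) -> int:
    """Parallel optics: one transmit and one receive fiber per lane."""
    return 2 * lanes


def mpo_size(fibers_needed: int) -> int:
    """Smallest common MPO size (12 or 24 fibers) that holds the fibers."""
    for size in (12, 24):
        if fibers_needed <= size:
            return size
    raise ValueError("needs more than one 24-fiber MPO")


def logical_ports(physical_ports: int, port_gbps: int, lane_gbps: int) -> int:
    """Logical ports available when every physical port is broken out."""
    return physical_ports * breakout_lanes(port_gbps, lane_gbps)


if __name__ == "__main__":
    for port in (40, 100):
        lanes = breakout_lanes(port, 10)
        fibers = parallel_fibers(lanes)
        print(f"{port}G -> {lanes} x 10G, {fibers} fibers, {mpo_size(fibers)}-fiber MPO")
    # Hypothetical 32-port 40G switch fully broken out to 10G.
    print(logical_ports(32, 40, 10))  # 128
```

A 40 Gb/s breakout fits a 12-fiber MPO (eight fibers used), while a ten-lane 100 Gb/s breakout needs a 24-fiber MPO, one reason the physical layer grows more complex.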

Referring to the roadmap in Figure 1, 100G BiDi is currently proprietary, but when the 802.3cm standard is completed in 2019, a standards-based version will likely become available. There will also likely be MSAs around 200G. The technology for 50G/λ over SWDM is available today, and OEMs are waiting for demand to increase; this will likely be an interim step until 400G interfaces become cost effective. Work on 100G/λ is underway and is expected to arrive sometime after 2022.
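Per-wavelength rates translate into the SWDM4 roadmap entries by simple multiplication, sketched here assuming the four SWDM4 wavelengths (850, 880, 910 and 940 nm) on a single duplex fiber pair. The 200G and 400G results correspond to the question-marked roadmap blocks and are projections, not published standards.

```python
# SWDM4 wavelengths (nm), all carried on one duplex fiber pair.
SWDM4_WAVELENGTHS_NM = (850, 880, 910, 940)
DUPLEX_FIBERS = 2


def swdm_aggregate_gbps(rate_per_lambda_gbps: int,
                        wavelengths: tuple = SWDM4_WAVELENGTHS_NM) -> int:
    """Aggregate rate = per-wavelength rate x number of wavelengths."""
    return rate_per_lambda_gbps * len(wavelengths)


if __name__ == "__main__":
    for rate in (10, 25, 50, 100):
        total = swdm_aggregate_gbps(rate)
        print(f"{rate}G/lambda x 4 = {total}G over {DUPLEX_FIBERS} fibers")
```

The fiber count stays at two while the aggregate rate scales with the per-wavelength rate.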

The industry trend is to simplify cabling as soon as the technology permits, driven by the overall applied link cost (cost per Gb/s). For example, 100GBASE-SR10 has been replaced by 100GBASE-SR4 and may soon be displaced by SWDM4 or BiDi technology.
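One way to see the simplification is to count fibers per 100 Gb/s link for each option named above. The counts assume the usual implementations (ten or four parallel lanes, or one duplex pair using wavelength multiplexing); cost per Gb/s is left out because it depends on pricing not given here.

```python
# Assumed typical fiber counts for one 100 Gb/s link (transmit plus receive).
FIBERS_PER_100G = {
    "100GBASE-SR10": 20,  # 10 parallel lanes, 24-fiber MPO
    "100GBASE-SR4": 8,    # 4 parallel lanes, 12-fiber MPO
    "100G-SWDM4": 2,      # 4 wavelengths on one duplex pair
    "100G BiDi": 2,       # wavelengths in both directions on one duplex pair
}


def fiber_reduction(baseline: str, option: str) -> float:
    """Ratio of fibers needed by `baseline` to fibers needed by `option`."""
    return FIBERS_PER_100G[baseline] / FIBERS_PER_100G[option]


if __name__ == "__main__":
    for name, fibers in FIBERS_PER_100G.items():
        ratio = fiber_reduction("100GBASE-SR10", name)
        print(f"{name}: {fibers} fibers, {ratio:.1f}x reduction vs SR10")
```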

To support higher speeds, techniques such as higher lane rates and more efficient modulation schemes are being used. For example, above a 25 Gb/s lane rate, PAM-4 modulation will replace NRZ. NRZ uses two signal levels to carry one bit per symbol, while PAM-4 uses four levels to carry two bits per symbol, doubling the bit rate at a given symbol rate.
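The PAM-4 doubling can be checked with a short sketch: bits per symbol is log2 of the number of levels, so the symbol rate needed for a given lane rate halves. Rates are nominal and ignore line-coding and FEC overhead (real 50 Gb/s PAM-4 lanes signal slightly above 25 GBd). The Gray-coded bit-to-level table is the mapping commonly used for PAM-4, included only for illustration; the function names are hypothetical.

```python
def bits_per_symbol(levels: int) -> int:
    """log2(levels) for a power-of-two number of signal levels."""
    if levels < 2 or levels & (levels - 1):
        raise ValueError("levels must be a power of two >= 2")
    return levels.bit_length() - 1


def symbol_rate_gbd(lane_gbps: float, levels: int) -> float:
    """Nominal symbol rate, ignoring line-coding and FEC overhead."""
    return lane_gbps / bits_per_symbol(levels)


# Gray-coded PAM-4: adjacent levels differ by exactly one bit.
GRAY_PAM4 = {(0, 0): 0, (0, 1): 1, (1, 1): 2, (1, 0): 3}


def pam4_encode(bits: list[int]) -> list[int]:
    """Map successive pairs of bits to PAM-4 levels 0..3."""
    if len(bits) % 2:
        raise ValueError("PAM-4 needs an even number of bits")
    return [GRAY_PAM4[(bits[i], bits[i + 1])] for i in range(0, len(bits), 2)]


if __name__ == "__main__":
    print(symbol_rate_gbd(25, 2))   # NRZ, 25 Gb/s lane: 25.0 GBd
    print(symbol_rate_gbd(50, 4))   # PAM-4, 50 Gb/s lane: 25.0 GBd
    print(pam4_encode([0, 0, 0, 1, 1, 1, 1, 0]))  # [0, 1, 2, 3]
```

A 50 Gb/s PAM-4 lane and a 25 Gb/s NRZ lane run at the same nominal symbol rate, which is why PAM-4 is the step beyond 25 Gb/s on the roadmap.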

(Figure 1 labels: lane rate 10, 25, 50 and 100 Gb/s; encoding NRZ, then PAM-4; aggregate speeds 100 Gb/s, 200 Gb/s, 400 Gb/s and 1 Tb/s; interfaces 40GBASE-SR4, 40GBASE-SWDM4, 100GBASE-SR10 and 100G-SWDM4, with 200G-, 400G- and 800G-SWDM4 and 1 Tb/s marked with question marks.)